We have a batch process that runs every morning, processes a bunch of data gathered out of our central e-commerce database and publishes messages on a queue for another service to pick up for further processing. This batch process takes about 30 odd minutes to finish which is not at all bad since our time budget allows for that run time.

We chose to implement the batch process in an unconventional way, we created a ASP.NET Core WebAPI and exposed a REST fire-and-forget endpoint that can be invoked either manually or on a schedule. Behind this endpoint we executed our workflow by offloading the execution to a background ThreadPool thread using Task.Run. This way the REST endpoint can return quickly without blocking while the background thread continues with its job.

| [HttpPost("api/workflow/invoke")] | |

| public IActionResult Invoke() | |

| { | |

| Task.Run(async ()=> await useCase.StartBackgroundWork()); | |

| return Accepted(); | |

| } |

Disclaimer: There are other ways to build long running batch applications on Windows for e.g. Windows Service using TopShelf, Hangfire etc but Windows Services cannot be invoked on demand which is something we needed, to be able to invoke a retry, for instance, following an outage. BackgroundService is another good model to use if you want to run long running jobs as a part of your ASP.NET Core application., however, some of the downsides with state management will still apply and internally it also uses a Task.Run.

One BIG downside to building this kind of batch systems is that if the app’s process dies, all the transient in-process state will be lost and because it was transient there would be no way to “resume” the operation after failure. You’ll just have to start the whole thing over!

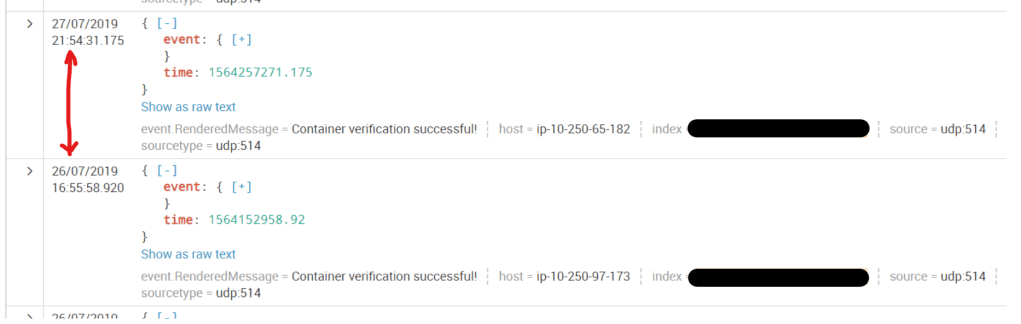

This model worked very well for nearly a year in production but then one morning, our monitoring showed that our message output was a third of what it was supposed to be. We dug into our logs to find out what happened and after several hours of poring over the logs, we noticed that up until certain point things were progressing normally and we were seeing all the expected events in the log. But then suddenly we started seeing log messages that we only expect to see at process start-up. Then it hit me! Part way through, somehow the process got restarted..but how? and why?

Further digging revealed that the time interval between 2 consecutive start-up log messages was around 29 hours.

This had never affected us before because it had always somehow happened outside of our critical run time window. A quick internet search revealed that this is by design in IIS. IIS simply recycles the app pool in which your application runs, every 29 hours! Why 29 hours? Because its a non-resonate pattern which doesn’t have a predictable occurrence pattern due to being a prime number? Not sure! This was done to ensure that IIS regularly cleans house and not leak resources. Because web services aren’t usually used to build long running background processes, these recycles had never affected any of the web applications/services that I had seen or built. This kinda let this feature of IIS get buried deep within the sub-conscious almost to the point of forgetting.

Anyway, mystery of process restarts solved! However, how did we mitigate this? We evaluated 3 broad ways to solve/mitigate this:

A) Simply add pessimistic recurring invocations after the original invocation. For this to work, we needed to make sure that the process was idempotent because each invocation will basically re-run the whole use case and publish all the messages again.

Idempotency is always a good idea in architectures decoupled by message queues.

B) Change the applicationHost.config on the server where the service is hosted, to modify the recycling interval or set a specific out of hours time.

The below script changes the schedule to 9:10 PM

C:\Windows\System32\inetsrv

λ appcmd.exe set config -section:system.applicationHost/applicationPools /+"[name='{name of your target app pool}'].recycling.periodicRestart.schedule.[value='21:10:00']" /commit:apphost

This is a little difficult in our organisation because almost all our AWS cloud infrastructure is immutable and provisioned in a standardised and scripted way. The definite upshot of this is that, all the infrastructure is created identically to eliminate environment variances and increase its reliability. By tweaking specific apps or environments, it would have made the operations’ job more difficult because they’d have to give our application special treatment, creating snowflakes. This is contrary to the idea of immutable infrastructure i.e. you never alter/modify/change the existing infrastructure but you create a new one with the desired configuration using set scripts in a predictable way and tear the old one down.

C) Last but not the least, convert the batch driven system into an event driven system that relies on message queues to buffer data before processing. This meant that even if the process was restarted, it will pick up where it left off and keep going without missing a beat. Because data is buffered in queues, no state will be lost. This represented a big change and therefore a big risk, to implement straight away. We’d have had to break up the service quite a bit to achieve it.

We settled for easiest option, the pessimistic option A i.e. 3 more recurring invocations (executed by a CRON triggered Lambda) after the initial invocation, spaced 15 minutes apart. This more or less “eliminated” this particular problem since we put it in place around 6 months ago. Pragmatism around this solution helped us get the most bang for the buck and resolve the problem quickly while still keeping more sophisticated architectural solutions open for the future.